Pitch Perfect: Behind the Scenes of Building an AI Elevator Pitch Coach

When I first started sketching Pitch Perfect, I wasn’t trying to build another chatbot. I was job searching and wanted to see whether AI could actually coach me and help me prepare for interviews. I didn’t want it to just answer questions. I wanted it to listen, reflect, and help refine the most human thing we get to do with interviewers: talk about ourselves.

That job search became the seed for Pitch Perfect, an AI elevator pitch coach designed to help learners and professionals craft a clear, confident 60-second pitch.

🎬 Watch the early Voiceflow prototype:

Voiceflow Demo (Video)

From Idea to Prototype

I started where all good experiments begin, with a rapid prototype.

The first version lived inside Voiceflow, a no-code conversation builder that let me map out tone, flow, and user feedback loops visually. Voiceflow was perfect for early testing: I could simulate voice and text responses, design conditional branches (“too long,” “too vague,” “too buzzwordy”), and see how users moved through the experience.
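To make the branching idea concrete, here is a minimal Python sketch of the same three conditions. It is not the Voiceflow logic itself: the word-count thresholds, the buzzword list, and the `classify_pitch` name are all assumptions chosen for illustration, and word count is only a crude proxy for "too vague."

```python
import re

# Hypothetical thresholds and word list, chosen only for illustration.
MAX_WORDS = 150  # assumed to be roughly 60 seconds of speech
MIN_WORDS = 40   # assumed floor; shorter pitches are treated as too vague
BUZZWORDS = {"synergy", "disruptive", "passionate", "guru", "ninja", "rockstar"}


def classify_pitch(pitch: str) -> list[str]:
    """Return the feedback branches a pitch falls into."""
    words = re.findall(r"[a-z']+", pitch.lower())
    branches = []
    if len(words) > MAX_WORDS:
        branches.append("too long")
    if len(words) < MIN_WORDS:
        branches.append("too vague")
    if any(word in BUZZWORDS for word in words):
        branches.append("too buzzwordy")
    # No branch triggered means the pitch passes these simple checks.
    return branches or ["on track"]
```

A pitch can land in more than one branch at once, which is why the function returns a list rather than a single label.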

But as the design matured, I hit limits. I wanted memory, dynamic scoring, and feedback personalization. I also wanted to break free from subscription costs that scale with every test user.

So I decided to rebuild it from the ground up, in code. 👩‍💻

The Development Environment

The rebuild took place in Replit, a lightweight coding lab where I could experiment, debug, and test everything live in one place, with help from ChatGPT Plus (the paid subscription tier and its stronger models).

Core Stack:

- Python — handled the main application logic

- LangChain — orchestrated the conversation flow, prompt templates, and memory management

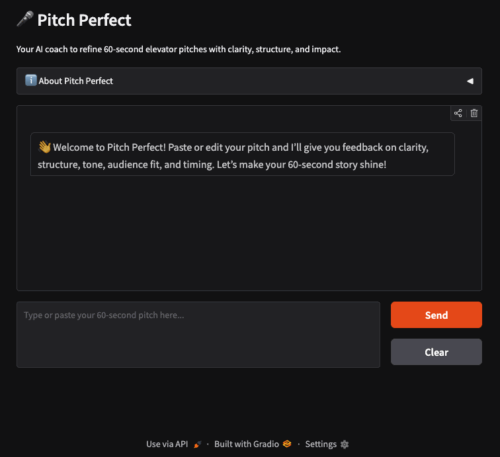

- Gradio — provided the chat interface where users interact with the coach

Each layer served a learning purpose: LangChain taught me how to structure conversations programmatically while Gradio helped me translate code into a usable, human interface.
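Here is a rough sketch of how those layers fit together, written in plain Python rather than with LangChain's own classes. The system prompt wording, the `build_messages` and `make_responder` helpers, and the `echo_llm` stand-in are all hypothetical. The point is only to show the shape: memory is the list of prior turns, and the chat interface calls a function that takes a message plus that history.

```python
from typing import Callable

# Hypothetical system prompt; the real coach's wording is not shown in this post.
SYSTEM_PROMPT = "You are a supportive coach helping someone refine a 60-second elevator pitch."

Message = dict[str, str]


def build_messages(history: list[Message], user_message: str) -> list[Message]:
    """Combine the system prompt, prior turns (the memory), and the new message."""
    return (
        [{"role": "system", "content": SYSTEM_PROMPT}]
        + [{"role": m["role"], "content": m["content"]} for m in history]
        + [{"role": "user", "content": user_message}]
    )


def make_responder(llm: Callable[[list[Message]], str]) -> Callable[[str, list[Message]], str]:
    """Wrap any model call in the (message, history) shape a chat UI expects."""
    def respond(message: str, history: list[Message]) -> str:
        return llm(build_messages(history, message))
    return respond


def echo_llm(messages: list[Message]) -> str:
    """Stand-in model for local testing: reports how much context it received."""
    return f"Received {len(messages)} messages; last was: {messages[-1]['content']}"


respond = make_responder(echo_llm)
```

A function with this `(message, history)` signature is what Gradio's `gr.ChatInterface(fn=respond)` accepts, assuming a Gradio version that passes history as role/content dictionaries. Replacing `echo_llm` with a real model call leaves the interface code untouched.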

Swapping the Brain

Phase 1 ran on OpenAI’s GPT-4o, which powered live API calls for natural, adaptive responses during development. It was smooth, but the more I tested, the more I realized I’d hit the same problem again: API costs add up.

Enter Phase 2.

I redeployed Pitch Perfect on Hugging Face Spaces, this time calling Llama 3.1 8B Instant through Groq’s free tier. It’s lightweight, responsive, and most importantly, free to run for demos. The swap taught me how to configure different LLM backends while keeping the same LangChain-Gradio structure intact.
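One way to picture the swap is a small configuration layer that picks the backend and leaves everything else alone. This is a sketch under assumptions, not the project's actual code: the `BackendConfig` class, the `LLM_BACKEND` environment variable, and the helper names are invented for illustration, and `llama-3.1-8b-instant` is the Groq model ID as I understand it. The `langchain_openai` and `langchain_groq` packages are imported lazily so only the chosen one needs to be installed.

```python
import os
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BackendConfig:
    provider: str
    model: str
    api_key_env: str  # environment variable the provider's client reads its key from


# Model names come from the post; the dictionary keys are illustrative.
BACKENDS = {
    "openai": BackendConfig("openai", "gpt-4o", "OPENAI_API_KEY"),
    "groq": BackendConfig("groq", "llama-3.1-8b-instant", "GROQ_API_KEY"),
}


def select_backend(name: Optional[str] = None) -> BackendConfig:
    """Pick a backend by name, falling back to the LLM_BACKEND env var, then Groq."""
    key = (name or os.environ.get("LLM_BACKEND", "groq")).lower()
    if key not in BACKENDS:
        raise ValueError(f"Unknown backend {key!r}; expected one of {sorted(BACKENDS)}")
    return BACKENDS[key]


def build_chat_model(config: BackendConfig):
    """Create the LangChain chat model for a config (requires the matching package)."""
    if config.provider == "openai":
        from langchain_openai import ChatOpenAI
        return ChatOpenAI(model=config.model)
    from langchain_groq import ChatGroq
    return ChatGroq(model=config.model)
```

With a layer like this, moving from Phase 1 to Phase 2 comes down to changing one setting, while the prompts and the Gradio interface stay the same.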

🚀 Try the live demo here:

Pitch Perfect: AI Elevator Pitch Coach on Hugging Face

What I Learned

Every phase of Pitch Perfect pushed my understanding of AI prototyping forward:

- Voiceflow showed me how to design conversational tone and flow visually.

- LangChain revealed the power of chaining logic, prompts, and memory.

- Gradio bridged AI logic to user experience in real time.

- Groq + Hugging Face introduced open hosting and inference configuration for public demos.

It’s not a product yet. It’s a learning artifact, a working demo of how designers can build AI experiences that coach rather than lecture. It also complements the work of a human job or career coach.

Why It Matters

The real value of Pitch Perfect isn’t just the prototype. It’s the process, a microcosm of what AI-native learning design looks like. It blends scripting, human-centered design, model orchestration, and interface prototyping all in one.

I learned an immense amount by building from scratch: writing Python, debugging in Replit, and designing a true end-to-end experience. I wanted to move beyond relying on ChatGPT to make a chatbot, and instead understand how one truly works.

In a world obsessed with prompt engineering, this project reminded me that design still comes first. You can’t engineer a meaningful conversation if you haven’t imagined the experience of being heard. 🤓